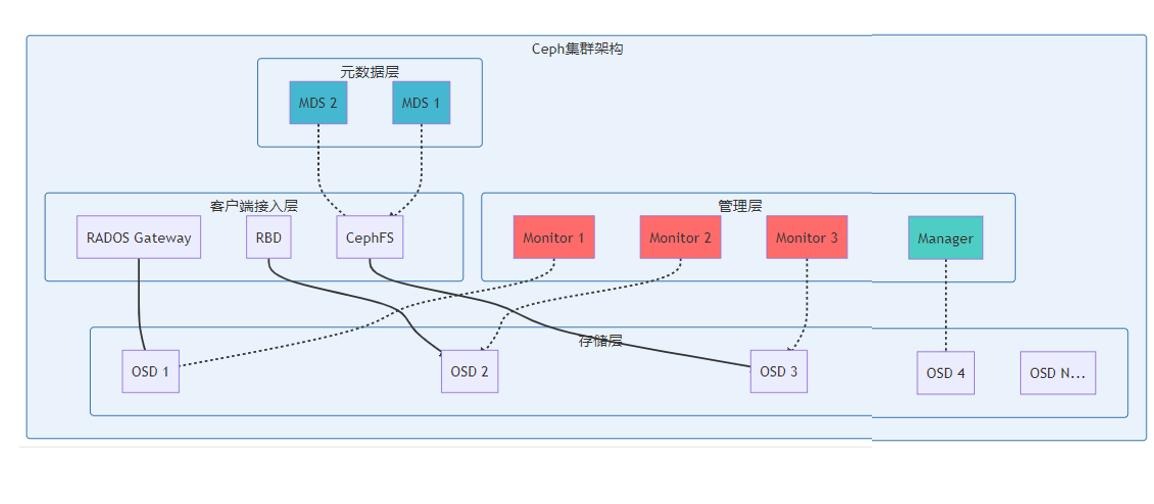

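1. Ceph Concepts

Ceph is a unified, highly reliable, highly scalable distributed storage system. It is built on RADOS (Reliable Autonomic Distributed Object Store) and exposes three services on top of it: block storage (RBD), a file system (CephFS), and object storage (RGW).

1.1 Core Concepts

1.1.1 RADOS

The core of Ceph is a distributed object store. All data is ultimately stored as objects on OSDs.

- No central metadata server: data placement and lookup are computed with the CRUSH algorithm

- Built-in replication / erasure coding, failure detection, automatic recovery, and data rebalancing

- The foundation for all three services: RBD, CephFS, and RGW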

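1.1.2 Object

- The smallest storage unit in Ceph: data + metadata + a unique ID

- Typically 4 MB, 8 MB, or 16 MB in size (configurable; RBD and CephFS default to 4 MB)

- Files, block devices, and S3/Swift objects from the upper layers are all split into objects and stored in RADOS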
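As a rough illustration of this splitting, the sketch below computes how many RADOS objects a given amount of data spans for a given object size. It is plain arithmetic and assumes binary size suffixes (K/M/G/T = powers of 1024). The helper names are hypothetical. For thin-provisioned RBD images the result is an upper bound, because objects are only created once they are written.

```bash
#!/usr/bin/env bash
# Convert "10G" / "4M" / "4096" into a byte count (binary units).
to_bytes() {
    local v=$1
    local num=${v%[KkMmGgTt]}       # numeric part (unit letter stripped, if any)
    case ${v#"$num"} in             # the stripped unit letter, or empty
        "")  echo "$num" ;;
        K|k) echo $(( num * 1024 )) ;;
        M|m) echo $(( num * 1024 * 1024 )) ;;
        G|g) echo $(( num * 1024 * 1024 * 1024 )) ;;
        T|t) echo $(( num * 1024 * 1024 * 1024 * 1024 )) ;;
    esac
}

# object_count <data size> [object size, default 4M]
# Ceiling division: a partially filled last object still counts as one object.
object_count() {
    local data obj
    data=$(to_bytes "$1")
    obj=$(to_bytes "${2:-4M}")
    echo $(( (data + obj - 1) / obj ))
}
```

For example, `object_count 10G` prints 2560, which is the number of 4 MB objects behind a fully written 10 GB RBD image. `object_count 10G 16M` prints 640.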

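1.1.3 Pool

- A logical partition: a namespace plus a set of data policies

- Defines the replica count, number of PGs, CRUSH rule, and type of data (data / metadata)

- Use separate pools for different services (RBD / CephFS / RGW)

1.1.4 PG (Placement Group)

- An intermediate logical layer: a group of objects

- Purpose: Ceph tracks placement per PG rather than per object. This reduces OSD metadata overhead and evens out data distribution.

- Each PG maps to N OSDs (N is set by the replica count)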

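1.1.5 CRUSH Algorithm

- A pseudo-random data distribution algorithm: placement is computed, not looked up in a central table

- Input: the PG ID (derived from a hash of the object name and the pool ID) + the CRUSH map + the CRUSH rule → Output: the list of target OSDs

- Supports failure-domain isolation at the rack / data center / host level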
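The sketch below is a deliberately simplified model of the two placement steps. First, the object name is hashed to a PG. Second, every OSD is ranked by a second hash for that PG, and the top N are taken (rendezvous hashing). This is not Ceph's implementation. Real Ceph uses the rjenkins hash, a "stable mod" for pg_num, and weighted straw2 buckets that respect failure domains. Here `cksum` and the function names are stand-ins chosen for brevity.

```bash
#!/usr/bin/env bash
# 32-bit hash of a string via POSIX cksum (first field of its output).
str_hash() {
    printf '%s' "$1" | cksum | cut -d' ' -f1
}

# Step 1 (simplified): object name -> PG.
# object_to_pg <pool_id> <object_name> <pg_num>  -> prints "<pool>.<pg in hex>", e.g. 1.2a
object_to_pg() {
    local pool=$1 name=$2 pg_num=$3
    local pg=$(( $(str_hash "$name") % pg_num ))
    printf '%s.%x\n' "$pool" "$pg"
}

# Step 2 (simplified): PG -> OSDs.
# pg_to_osds <pgid> <replicas> <osd_id>...  -> prints the chosen OSD ids, one per line.
# Every OSD gets a pseudo-random score for this PG; the highest scores win, so the
# same inputs always give the same answer without any lookup table.
pg_to_osds() {
    local pgid=$1 size=$2
    shift 2
    local osd
    for osd in "$@"; do
        echo "$(str_hash "${pgid}-osd.${osd}") ${osd}"
    done | sort -k1,1nr | head -n "$size" | cut -d' ' -f2
}
```

Running `pg_to_osds "$(object_to_pg 1 myobject 128)" 3 0 1 2 3 4 5` twice yields the same three OSDs, which is the "no central lookup table" property. Removing one OSD from the list only changes the result for PGs that had chosen that OSD. This mirrors how CRUSH limits data movement when the cluster changes.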

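1.2 Ceph Core Components

1.2.1 Monitor (MON): the cluster's brain

Role

- Maintains global cluster state and consistency (Paxos quorum)

- Holds the cluster maps: Monitor map, OSD map, PG map, CRUSH map, and MDS map

- Cluster authentication, service discovery, health checks, configuration management

- No single point of failure: usually deployed on an odd number of nodes (3 or 5)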
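The odd MON count follows from majority arithmetic, because Paxos needs more than half of the monitors to agree. The short script below prints the quorum size and the number of tolerated failures for 1 to 5 monitors. The helper names are hypothetical.

```bash
#!/usr/bin/env bash
# quorum_size <mons>: smallest strict majority, floor(n/2) + 1
quorum_size() {
    echo $(( $1 / 2 + 1 ))
}

# mon_failures_tolerated <mons>: MONs that can fail while a quorum still forms
mon_failures_tolerated() {
    echo $(( $1 - $(quorum_size "$1") ))
}

for n in 1 2 3 4 5; do
    printf '%d MONs: quorum %d, tolerates %d failure(s)\n' \
        "$n" "$(quorum_size "$n")" "$(mon_failures_tolerated "$n")"
done
```

The output shows that 4 MONs tolerate the same single failure as 3, and that 5 are needed to survive two failures. An even count therefore adds a daemon without adding fault tolerance.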

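Key parameters and the effect of tuning them

mon_clock_drift_allowed: tolerated clock skew between MONs (default 0.05 s)

- Increase: larger clock differences are tolerated → the cluster is more stable, but the risk to consistency rises

- Decrease: stricter clock requirements → HEALTH_WARN/ERR (clock skew) appears more easily, with possible service disruption

mon_osd_down_out_interval: how long an OSD may stay down before it is marked out (default 600 s in current releases)

- Increase: failures are declared more slowly → recovery starts later, but flapping causes less churn

- Decrease: failures are declared quickly → recovery starts immediately, with larger IO fluctuations

mon_paxos_proposer_timeout: Paxos proposal timeout (confirm that this option exists in your release with ceph config help mon_paxos_proposer_timeout before changing it)

- Increase: more stable on poor networks → slower convergence, configuration updates take longer to propagate

- Decrease: faster convergence → proposals fail more easily under network jitter, and the cluster becomes less stable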
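The parameters in this chapter are normally changed through the central configuration database instead of ceph.conf. The sketch below shows that workflow: view the option, set it, then read it back. The helper names `run` and `tune` and the 900-second example value are assumptions. With DRY_RUN=1, the commands are printed instead of executed.

```bash
#!/usr/bin/env bash
# Print commands instead of running them when DRY_RUN is set (no cluster needed).
run() {
    if [[ -n "${DRY_RUN:-}" ]]; then
        echo "$*"
    else
        "$@"
    fi
}

# tune <who> <option> <value>: show the option's documentation, store the new
# value in the MON config database, then read it back to confirm.
tune() {
    local who=$1 opt=$2 val=$3
    run ceph config help "$opt" || return 1    # also fails if the option name is unknown
    run ceph config set "$who" "$opt" "$val"
    run ceph config get "$who" "$opt"
}
```

On a real cluster, `tune mon mon_osd_down_out_interval 900` would set a 15-minute out delay. Values stored with `ceph config set` persist across daemon restarts. If `ceph config help` rejects a name, that option does not exist in your release.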

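1.2.2 OSD (Object Storage Daemon): the core of data storage

Role

- Actually stores the data objects (BlueStore; the older FileStore backend is deprecated)

- Replica synchronization, failure recovery, backfill, and rebalancing

- Reports its status through heartbeats and serves client IO

- Each OSD usually corresponds to one physical disk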

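Key parameters and the effect of tuning them

osd_pool_default_size: default replica count for new pools (default 3)

- Increase: data is safer → less usable capacity, more network / IO load

- Decrease: more capacity and performance → lower reliability (higher risk of data loss when disks fail)

osd_recovery_max_active: maximum concurrent recovery operations per OSD (current releases split it into osd_recovery_max_active_hdd = 3 and osd_recovery_max_active_ssd = 10; under the mClock scheduler, the default since Quincy, these limits are governed by the mClock profile)

- Increase: faster recovery → client IO latency spikes, and the cluster is less stable

- Decrease: client IO takes priority → slower recovery, and a longer window with reduced redundancy

osd_op_threads: OSD worker threads (removed in newer releases; the equivalent settings are osd_op_num_shards and osd_op_num_threads_per_shard)

- Increase: faster under highly concurrent IO → higher CPU usage and context-switch overhead

- Decrease: saves CPU → requests queue up and latency rises under high concurrency

bluestore_compression_algorithm: BlueStore compression algorithm (lz4 / zstd; compression itself is turned on with bluestore_compression_mode or the pool's compression_mode)

- lz4: saves space (typically 1.2–2×, depending on the data) with low CPU overhead

- zstd: higher compression ratio → higher read/write latency and CPU usage

osd_memory_target: per-OSD memory target (default 4 GB; the OSD tries to stay under it, but it is not a hard limit)

- Increase: larger cache, better read performance → higher memory usage, OOM risk

- Decrease: saves memory → lower cache hit rate, worse read performance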

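pg_num / pgp_num: number of PGs

- Too few: uneven data distribution, OSD hot spots, slow recovery

- Too many: memory usage balloons, heavy heartbeat load, and very slow expansion or shrinking

- Recommended:

Total OSDs × (100–300) / replica count / number of pools, rounded to a power of two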
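The rule of thumb above is easy to script. This sketch rounds up to the next power of two. That rounding choice is an assumption, because calculators differ and some round to the nearest power instead. `pg_calc` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# pg_calc <osds> <target PGs per OSD> <replicas> <pools>
# Applies OSDs x per-OSD / replicas / pools, then rounds up to a power of two.
pg_calc() {
    local osds=$1 per_osd=$2 size=$3 pools=$4
    local raw=$(( osds * per_osd / size / pools ))
    local pg=1
    while (( pg < raw )); do
        pg=$(( pg * 2 ))
    done
    echo "$pg"
}
```

`pg_calc 3 100 3 1` returns 128, the value used for the rbd pool in section 5. On current releases, the pg_autoscaler manager module is enabled by default and can choose pg_num for you. `ceph osd pool autoscale-status` shows its recommendations.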

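1.2.3 Manager (MGR): the cluster's housekeeper

Role

- Metrics, Dashboard, REST API, extended CLI commands

- Load balancing, automated scheduling, status statistics

- Modules: Prometheus, Zabbix, Balancer, Dashboard, etc.

Effect of changes

- Enabling the balancer module (ceph balancer on): automatic data balancing → more background IO, slightly higher client latency

- Stopping the MGR: Dashboard and monitoring stop working, but data IO is not affected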

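1.2.4 RGW (RADOS Gateway): object storage gateway

Role

- Provides S3- and Swift-compatible object storage APIs

- User / bucket / permission management, quotas, multi-site replication

Effect of changes

rgw_thread_pool_size: number of RGW threads

- Increase: higher concurrency → higher CPU / memory usage

rgw_max_concurrent_requests: maximum concurrent requests

- Increase: handles more concurrency → longer queues, higher latency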

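1.2.5 RBD (RADOS Block Device): block storage

Role

- Provides distributed block devices (cloud disks, virtual machine disks)

- Snapshots, clones, incremental backups, resizing

Effect of changes

rbd_cache: librbd client-side cache (used by librbd clients such as QEMU; kernel-mapped devices use the page cache instead)

- Enabled: mainly speeds up small writes and sequential reads → in writeback mode, data that has not been flushed is lost if the client crashes or loses power (rbd_cache_writethrough_until_flush limits this risk)

rbd_cache_size: client cache size

- Increase: better performance → higher client memory usage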

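1.3 CephFS-Specific Components

CephFS = MDS (metadata) + a metadata pool and a data pool (RADOS). Together they provide a POSIX-compatible distributed file system.

1.3.1 MDS (Metadata Server)

Role

- Manages the file system namespace: directories, file names, permissions, inodes, attributes

- Handles metadata operations such as open / stat / readdir / rename / unlink

- Metadata caching, subtree partitioning across active MDS daemons, load balancing

- File data IO does not go through the MDS (clients talk to the OSDs directly)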

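Key parameters and the effect of tuning them

mds_cache_memory_limit: MDS metadata cache size (default 4 GB in recent releases; older releases used the inode-count option mds_cache_size)

- Increase: very fast metadata operations → high memory usage, and large directories can still stall

- Decrease: saves memory → frequent cache eviction, slow stat / readdir

mds_max_completed_requests: number of completed requests kept for replay

- Increase: faster replays / retries → higher memory usage

mds_beacon_interval: interval between MDS beacons (heartbeats) to the MONs (default 4 s)

- Increase: less network / CPU use → slower failure detection and failover

- Decrease: faster failover → more heartbeat overhead

Standby / standby-replay MDS (configured with mds_standby_for_rank in old releases; now with ceph fs set <fs_name> allow_standby_replay true)

- Enabled: an MDS failure is taken over within seconds → extra resource usage

mds_cache_mid: insertion point for new entries in the cache LRU (default 0.7)

- Controls how newly cached metadata is prioritized for eviction; rarely needs tuning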

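1.3.2 CephFS Two-Pool Layout

- fs_metadata pool: stores inodes, directories, permissions, etc. (small capacity, high IOPS, low latency)

- Recommended: SSD, 3 replicas, a small number of PGs

- fs_data pool: stores file contents (large capacity, high throughput)

- Recommended: HDD/SSD, 3 replicas or erasure coding, and a sensible PG count. An erasure-coded pool needs allow_ec_overwrites and is best added as an additional data pool instead of serving as the default one.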
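To compare the two data-pool options, the sketch below turns raw capacity into usable capacity: raw / size for replication, and raw × k / (k + m) for erasure coding. The function names are hypothetical. The calculation ignores the full ratios and BlueStore overhead, so real usable space is lower.

```bash
#!/usr/bin/env bash
# usable_replicated <raw TB> <replica count>  -> raw / size
usable_replicated() {
    awk -v raw="$1" -v size="$2" 'BEGIN { printf "%.1f\n", raw / size }'
}

# usable_ec <raw TB> <k data chunks> <m coding chunks>  -> raw * k / (k + m)
usable_ec() {
    awk -v raw="$1" -v k="$2" -v m="$3" 'BEGIN { printf "%.1f\n", raw * k / (k + m) }'
}
```

For 30 TB of raw disk, 3 replicas give 10.0 TB and a 4+2 erasure code gives 20.0 TB. Both survive two lost copies or chunks. However, 4+2 needs at least 6 hosts when the failure domain is host, and it costs more CPU and latency on writes.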

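1.4 Component Relationships and Overall Architecture

- MON: maintains the cluster maps and consistency

- OSD: stores data; handles replication, recovery, and IO

- MGR: monitoring, Dashboard, scheduling

- MDS: used only by CephFS; manages metadata

- RBD / RGW / CephFS: upper-layer interfaces built on RADOS

1.5 Tuning Principles

- MON: stability first; do not set clock / timeout values aggressively

- OSD: balance client IO against recovery; lower the recovery parameters during peak hours

- PG: calculate once, adjust in batches, and never change it frequently

- MDS: give it enough memory, because metadata performance determines the file system experience

- Replica count: 3 replicas in production, balancing capacity and safety

- Compression: enable lz4 on BlueStore to balance space and performance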

2. Installation Commands

[root@node01 ~]# dnf search release-ceph

[root@node01 ~]# dnf install --assumeyes centos-release-ceph-squid

[root@node01 ~]# dnf install --assumeyes cephadm

[root@node01 ~]# CEPH_RELEASE=19.2.3

[root@node01 ~]# curl --silent --remote-name --location https://download.ceph.com/rpm-${CEPH_RELEASE}/el9/noarch/cephadm

[root@node01 ~]# chmod +x cephadm

[root@node01 ~]# curl -o /etc/pki/rpm-gpg/RPM-GPG-KEY-EPEL-9 https://dl.fedoraproject.org/pub/epel/RPM-GPG-KEY-EPEL-9

[root@node01 ~]# ./cephadm add-repo --release 19.2.3

[root@node01 ~]# ./cephadm install

[root@node01 ~]# yum -y install python3-jinja2 python3-yaml

[root@node01 ~]# cephadm bootstrap --mon-ip 192.168.173.201

Ceph Dashboard is now available at:

URL: https://node01:8443/

User: admin

Password: 5p176ukk0r

Enabling client.admin keyring and conf on hosts with "admin" label

[root@node01 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub node02

[root@node01 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub node03

[root@node01 ~]# yum -y install ceph-common-19.2.3

[root@node01 ~]# ceph orch host label add node01 _admin

[root@node01 ~]# ceph orch host add node02 192.168.173.202 --labels osd

[root@node01 ~]# ceph orch host add node03 192.168.173.203 --labels osd

[root@node01 ~]# ceph orch daemon add osd node01:/dev/nvme0n2

[root@node01 ~]# ceph orch daemon add osd node02:/dev/nvme0n2

[root@node01 ~]# ceph orch daemon add osd node03:/dev/nvme0n2

# Monitors: cephadm places them automatically as hosts are added, and they can also be added manually

[root@node01 ~]# ceph orch daemon add mon node02

[root@node01 ~]# ceph orch daemon add mon node03

[root@node01 ~]# ceph mon stat

[root@node01 ~]# ceph orch ps --daemon-type mon
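With more nodes, the per-host commands above are easier to drive from a loop. The sketch below assumes the same layout as this lab: one data disk per host, and every host labelled osd. The helper names and the host=ip argument format are inventions of this sketch. With DRY_RUN=1, the commands are printed instead of executed, so they can be reviewed first.

```bash
#!/usr/bin/env bash
# Print commands instead of running them when DRY_RUN is set.
run() {
    if [[ -n "${DRY_RUN:-}" ]]; then
        echo "$*"
    else
        "$@"
    fi
}

# add_hosts <device> <host=ip>...: copy the cluster SSH key, add each host with
# the osd label, then create an OSD on <device> of that host.
add_hosts() {
    local dev=$1 entry host ip
    shift
    for entry in "$@"; do
        host=${entry%%=*}     # text before "="
        ip=${entry#*=}        # text after "="
        run ssh-copy-id -f -i /etc/ceph/ceph.pub "root@${host}"
        run ceph orch host add "$host" "$ip" --labels osd
        run ceph orch daemon add osd "${host}:${dev}"
    done
}
```

`DRY_RUN=1 add_hosts /dev/nvme0n2 node02=192.168.173.202 node03=192.168.173.203` prints the six commands for the two hosts used above. Afterwards, `ceph orch host ls` and `ceph osd tree` confirm the result.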

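3. Host Management Commands

- Add a node to the Ceph cluster

# Basic add (node name and IP; copy /etc/ceph/ceph.pub to the node first, as in section 2)

ceph orch host add node02 192.168.1.102

# Optional: attach labels while adding (for grouping, e.g. osd nodes, mon nodes)

ceph orch host add node03 192.168.1.103 --labels osd,mon

- View node information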

# Basic view (node name, IP, labels, status)

ceph orch host ls

# Formatted output (clearer, suited to script parsing)

ceph orch host ls --format json-pretty

- View the services running on a node

# Services on a single node (e.g. node02)

ceph orch ps node02

# Service distribution across all nodes

ceph orch ps

- View node disk information (for OSDs)

# List a node's disks and whether they are available for OSDs

ceph orch device ls node02

# Show more detail (including health information)

ceph orch device ls node02 --wide

- Node maintenance mode

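# Enter maintenance mode (stops the node's Ceph daemons and sets noout so its OSDs are not marked out)

ceph orch host maintenance enter node02

# Exit maintenance mode (after maintenance is done)

ceph orch host maintenance exit node02

- Modify node labels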

# Add a label (e.g. add the osd label to node02)

ceph orch host label add node02 osd

# Remove a label (e.g. remove node02's mon label)

ceph orch host label rm node02 mon

# View the node's labels

ceph orch host ls --host_pattern node02

- Update the Ceph version on nodes

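# Staggered upgrade: upgrade only the daemons on the listed hosts (cephadm requires the MGR daemons to be upgraded first)

ceph orch upgrade start --image quay.io/ceph/ceph:v20.2.0 --hosts node02

# Upgrade all nodes in the cluster (full upgrade)

ceph orch upgrade start --image quay.io/ceph/ceph:v20.2.0

- Remove a node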

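# 1. Drain the node: cephadm schedules removal of all its daemons and migrates OSD data first (track progress with ceph orch osd rm status)

ceph orch host drain node02

# 2. Remove the node from the cluster once ceph orch ps node02 lists no daemons

ceph orch host rm node02

# 3. Optional: clean up Ceph leftovers (run locally on node02; if the ceph CLI is not set up there, substitute the fsid shown by ceph fsid on an admin node)

cephadm rm-cluster --fsid $(ceph fsid) --force

# Force-remove the node (emergency only, use with caution)

ceph orch host rm node02 --force

4. OSD Management Commands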

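- Create OSDs

# Create on a specific node + disk (e.g. nvme0n2 on node01)

ceph orch daemon add osd node01:/dev/nvme0n2

# Batch creation on every available disk of the listed nodes (this creates a managed service that also picks up disks added later)

ceph orch apply osd --all-available-devices --placement="node01,node02"

- Verify the result

ceph osd ls # list all OSD IDs (printed as bare numbers: 0, 1, ...)

ceph osd tree # OSD topology (node-to-OSD mapping, status)

- Basic status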

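# Status of all OSDs (host, used/available space, IO rates, state)

ceph osd status

# One-line summary with the number of OSDs that are up and in: ceph osd stat

# Detailed OSD topology (hosts, up/down state, CRUSH weight, reweight)

ceph osd tree

# Information about a single OSD (e.g. osd.0)

ceph osd info osd.0

- Performance / health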

# Per-OSD commit / apply latency (ms)

ceph osd perf

# OSD disk usage (capacity, utilization)

ceph osd df

# Formatted output (clearer)

ceph osd df --format json-pretty

- Map OSDs to disks / processes

# OSD daemon distribution (node, status, version, container)

ceph orch ps --daemon-type osd

# Devices on a node and the OSD daemons that use them (e.g. node01)

ceph device ls-by-host node01

- OSD maintenance operations

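# Mark the OSD out first. Ceph then migrates its data to other OSDs, so no data is lost.

# Mark a single OSD out (e.g. osd.0)

ceph osd out osd.0

# Mark several at once (e.g. osd.0 and osd.1)

ceph osd out osd.0 osd.1

# Stop an OSD (e.g. osd.0)

ceph orch daemon stop osd.0

# Start an OSD

ceph orch daemon start osd.0

# Restart an OSD

ceph orch daemon restart osd.0

# Restart every OSD on a node (e.g. node01); ceph orch daemon restart acts on one daemon at a time

for d in $(ceph orch ps node01 --daemon-type osd | awk 'NR > 1 {print $1}'); do ceph orch daemon restart "$d"; done

# Mark the OSD in again (after maintenance)

ceph osd in osd.0

# Set the OSD's CRUSH weight and location (e.g. weight 1.0 for osd.0; by default the CRUSH weight is the disk size in TiB)

ceph osd crush set osd.0 1.0 host=node01

# View OSD CRUSH weights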

ceph osd crush tree

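# Safely remove an OSD (recommended)

# Step 1: mark the OSD out so its data migrates away, and wait until ceph osd safe-to-destroy osd.0 reports it is safe

ceph osd out osd.0

# Step 2: stop the OSD daemon

ceph orch daemon stop osd.0

# Step 3: remove the OSD from the CRUSH map

ceph osd crush rm osd.0

# Step 4: delete the OSD's authentication key

ceph auth del osd.0

# Step 5: remove the OSD from the cluster (ceph osd purge 0 --yes-i-really-mean-it does steps 3-5 at once; on cephadm clusters, ceph orch osd rm 0 runs the whole procedure)

ceph osd rm osd.0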
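In practice, the wait in step 1 works best as a polling loop. `ceph osd safe-to-destroy osd.N` exits non-zero while removing the OSD could still reduce data durability. The sketch below wraps that check in a retry loop. The function name, the default retry count and delay, and the CEPH_CMD override (used only to stub the command in tests) are assumptions of this sketch.

```bash
#!/usr/bin/env bash
# wait_safe_to_destroy <osd id> [retries=60] [delay seconds=10]
# Polls until Ceph confirms the OSD holds no data still needed for redundancy.
# CEPH_CMD defaults to "ceph"; pointing it at a stub allows a dry test.
wait_safe_to_destroy() {
    local id=$1 retries=${2:-60} delay=${3:-10} i
    for (( i = 1; i <= retries; i++ )); do
        if ${CEPH_CMD:-ceph} osd safe-to-destroy "osd.${id}" >/dev/null 2>&1; then
            echo "osd.${id} is safe to destroy (after ${i} check(s))"
            return 0
        fi
        sleep "$delay"
    done
    echo "osd.${id} still holds needed data after ${retries} checks" >&2
    return 1
}
```

A typical use is `ceph osd out osd.0 && wait_safe_to_destroy 0 && ceph orch daemon stop osd.0`, followed by the remaining removal steps.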

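# Force-remove an OSD (emergency only, use with caution): removes it from CRUSH, auth, and the OSD map in one step without waiting for data migration

ceph osd purge 0 --yes-i-really-mean-it

- Common OSD troubleshooting commands

# View the OSD's log (run on the host where the OSD lives)

cephadm logs --name osd.0

# Check that the OSD daemon responds

ceph tell osd.0 version

# Cluster health (focus on OSD-related warnings)

ceph health detail

5. Storage Pool Commands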

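- Create a storage pool

# Create the RBD pool first (if it does not exist yet)

ceph osd pool create rbd 128 128 # 128 is the PG/PGP count; a smaller value is fine for test environments

rbd pool init rbd # initialize the pool for RBD

5.1 RBD Block Storage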

- Create an RBD image

# Basic: create a 10 GB image named demo.img (in the default pool rbd)

rbd create demo.img --size 10G

# In a specific pool: create test.img (20 GB) in pool1

rbd create pool1/test.img --size 20G

# Advanced: set the object size (4M) and features (e.g. layering, which cloning requires)

rbd create demo2.img --size 5G --object-size 4M --features layering

- View RBD images

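# All images in the default pool (rbd)

rbd ls

# Images in a specific pool

rbd ls pool1

# Image details (e.g. demo.img)

rbd info demo.img

# Image details in a specific pool

rbd info pool1/test.img

# Space used by the image (including snapshots)

rbd du demo.img

- Resize an image (grow / shrink)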

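# Grow: resize demo.img to 15 GB (then grow the filesystem inside, e.g. resize2fs /dev/rbd0)

rbd resize demo.img --size 15G

# Shrink: resize demo.img to 8 GB (shrink the filesystem inside and unmount it first; anything beyond 8 GB is lost, so use with caution)

rbd resize demo.img --size 8G --allow-shrink

# Check the result

rbd info demo.img

- Delete an RBD image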

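# Delete demo.img in the default pool (purge its snapshots first with rbd snap purge demo.img)

rbd rm demo.img

# Delete an image in a specific pool

rbd rm pool1/test.img

# If removal fails because the image is still in use ("image still has watchers"), find the client that holds it open and unmap it there first

rbd status demo.img

- Create a snapshot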

# Create snapshot snap1 of demo.img

rbd snap create demo.img@snap1

# Create a snapshot named with a timestamp (easier to manage)

rbd snap create demo.img@$(date +%Y%m%d_%H%M%S)

- View snapshots

# All snapshots of demo.img

rbd snap ls demo.img

# Snapshot details

rbd info demo.img@snap1

- Roll back to a snapshot

# Roll demo.img back to snapshot snap1 (unmount and unmap the image first)

rbd snap rollback demo.img@snap1

- Delete snapshots

# Delete a single snapshot

rbd snap rm demo.img@snap1
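A common middle ground between deleting one snapshot and purging all of them is to keep only the newest few timestamped snapshots (those created with the date-based name above). The sketch below only decides which names to delete. The function name and keep count are illustrative. It assumes names of the form YYYYmmdd_HHMMSS, which sort chronologically as plain strings.

```bash
#!/usr/bin/env bash
# snaps_to_prune <keep>: read snapshot names on stdin and print those to delete,
# oldest first. Only names shaped like 20260301_120000 are considered; anything
# else (e.g. a manually named "snap1") is left alone.
snaps_to_prune() {
    local keep=$1
    grep -E '^[0-9]{8}_[0-9]{6}$' |
        sort |
        awk -v keep="$keep" '{ names[NR] = $0 }
            END { for (i = 1; i <= NR - keep; i++) print names[i] }'
}
```

With jq installed, the names can come from the JSON listing and be deleted one by one. Protected snapshots are refused until they are unprotected: `rbd snap ls demo.img --format json | jq -r '.[].name' | snaps_to_prune 7 | while read -r s; do rbd snap rm "demo.img@$s"; done`.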

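# Delete all snapshots (batch cleanup; protected snapshots must be unprotected first)

rbd snap purge demo.img

- Protect a snapshot (needed before cloning from it)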

# Protect snapshot snap1 (prevents it from being deleted)

rbd snap protect demo.img@snap1

# Remove protection (required before deleting the snapshot)

rbd snap unprotect demo.img@snap1

- Create a clone

# Clone new_demo.img from demo.img@snap1

rbd clone demo.img@snap1 new_demo.img

# Clone into a specific pool

rbd clone demo.img@snap1 pool1/clone_demo.img

- View clone relationships

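# Show the image's parent snapshot (where it was cloned from)

rbd info new_demo.img | grep parent

- Flatten a clone (detach it from the parent snapshot so it stands alone)

# Flatten new_demo.img

rbd flatten new_demo.img

# Check the result (the parent field is gone)

rbd info new_demo.img

- Map an image to a local device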

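# Map demo.img locally (creates a device such as /dev/rbd0 and prints its path)

rbd map demo.img

# Map as a specific Ceph (cephx) user with an explicit keyring

rbd map demo.img --name client.admin --keyring /etc/ceph/ceph.client.admin.keyring

# Show current mappings

rbd showmapped

- Format and mount the image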

# Create an ext4 filesystem (first use only; this erases any existing data)

mkfs.ext4 /dev/rbd0

# Mount it on /mnt/rbd

mkdir -p /mnt/rbd

mount /dev/rbd0 /mnt/rbd

- Unmount and unmap

# Unmount first

umount /mnt/rbd

# Then unmap

rbd unmap /dev/rbd0
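The map, format, mount, unmount, and unmap steps above can be wrapped in two small functions. This takes the device path from what `rbd map` prints instead of assuming /dev/rbd0, and it never re-formats an image that already carries a filesystem. The sketch assumes ext4, as in the commands above, and its function names are illustrative. It relies on `rbd map` printing the device it created, and on `blkid -p` exiting with 2 when it finds no filesystem signature.

```bash
#!/usr/bin/env bash
# map_and_mount <pool/image> <mountpoint>: map the image, create ext4 only if the
# device has no filesystem yet, mount it, and print the device path.
map_and_mount() {
    local image=$1 mnt=$2 dev rc
    dev=$(rbd map "$image") || return 1          # prints the new device, e.g. /dev/rbd0
    blkid -p "$dev" >/dev/null 2>&1; rc=$?       # probe for an existing filesystem
    case $rc in
        0) ;;                                      # already formatted: keep the data
        2) mkfs -t ext4 "$dev" >&2 || return 1 ;;  # nothing found: first use, create ext4
        *) echo "blkid returned $rc for $dev; not formatting" >&2; return 1 ;;
    esac
    mkdir -p "$mnt"
    mount "$dev" "$mnt" && echo "$dev"
}

# umount_and_unmap <mountpoint>: unmount, then unmap the RBD device mounted there.
umount_and_unmap() {
    local mnt=$1 dev
    dev=$(findmnt -n -o SOURCE "$mnt") || return 1   # device currently mounted at $mnt
    umount "$mnt" && rbd unmap "$dev"
}
```

`dev=$(map_and_mount rbd/demo.img /mnt/rbd)` mounts the image and records its device path. `umount_and_unmap /mnt/rbd` reverses the operation.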

# Force unmap (when the device is busy)

rbd unmap -o force /dev/rbd0

5.1.1 iSCSI Export

- RBD → iSCSI with plain targetcli (no ceph-iscsi required)

dnf install -y targetcli python3-kmod python3-pyparsing

# Map demo.img (default rbd pool)

rbd map demo.img

# Verify the mapping (/dev/rbd0 in the output means success)

rbd showmapped

# Enter the interactive shell

targetcli

- Inside targetcli, run:

# 1. 创建块存储后端(关联 RBD 映射的设备 /dev/rbd0)

/backstores/block create demo_iscsi /dev/rbd0

# 2. 创建 iSCSI 目标名称(格式固定,可自定义后半段)

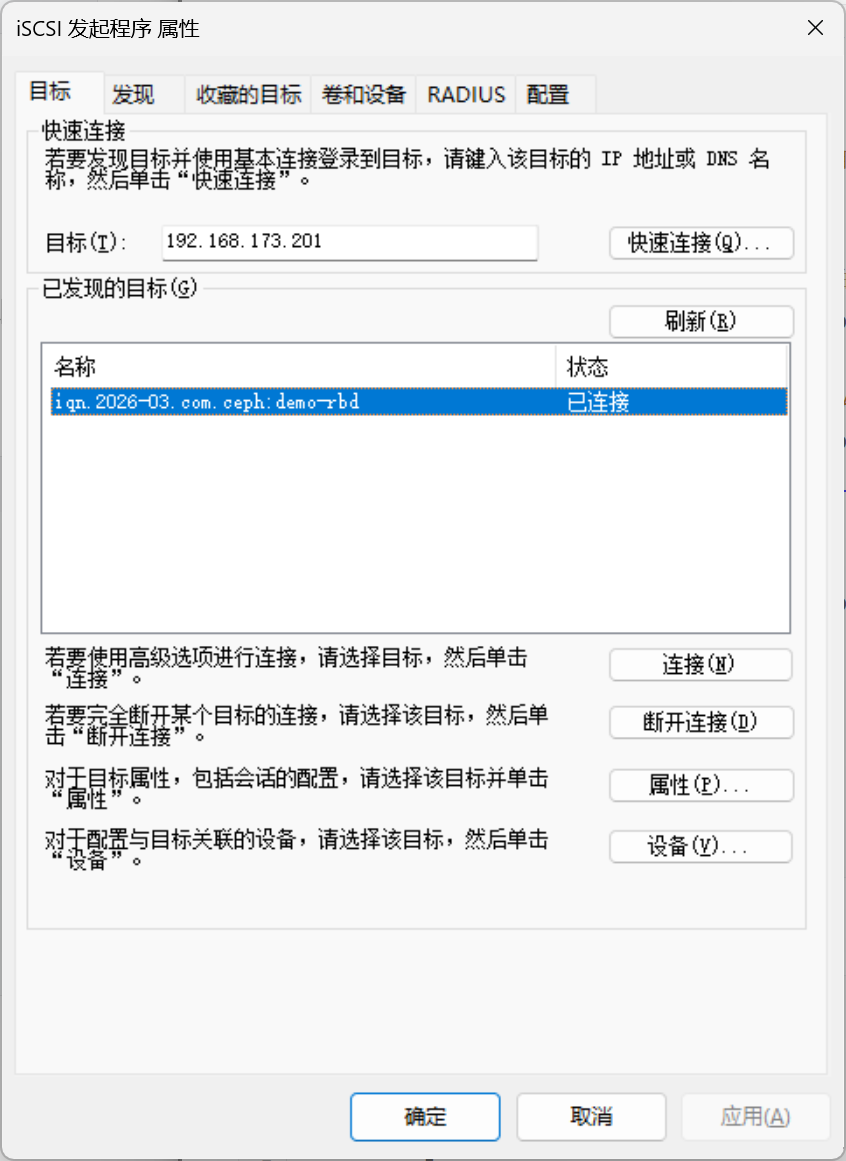

/iscsi create iqn.2026-03.com.ceph:demo-rbd

# 3. 为 iSCSI 目标添加 LUN(关联第一步的块存储)

/iscsi/iqn.2026-03.com.ceph:demo-rbd/tpg1/luns create /backstores/block/demo_iscsi

# 4. 关闭认证(测试环境简化,生产可加 CHAP)

/iscsi/iqn.2026-03.com.ceph:demo-rbd/tpg1 set attribute authentication=0 demo_mode_write_protect=0 generate_node_acls=1 cache_dynamic_acls=1

# 5. 允许所有客户端访问(测试用,生产可指定客户端 IQN)

/iscsi/iqn.2026-03.com.ceph:demo-rbd/tpg1/acls create ANY

# 6. 保存配置(重启后不丢失)

saveconfig

# 7. 退出

exit- 启动并配置 iSCSI 服务自启

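# Start the target service (the iSCSI target) and enable it at boot

systemctl enable --now target

# Check the service status (active (running) means it is working)

systemctl status target

- Connect from Windows with the iSCSI Initiator (if firewalld runs on the target host, open TCP port 3260 first: firewall-cmd --permanent --add-port=3260/tcp && firewall-cmd --reload)

- Key verification and maintenance commands

# Show the iSCSI configuration (confirm that the target and LUN exist)

targetcli ls

# Show current iSCSI sessions (visible once Windows has connected)

targetcli sessions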

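# Stop / restart the iSCSI service

systemctl stop target

systemctl restart target

# Unmap the RBD image when it is no longer exported (delete the demo_iscsi backstore in targetcli first, otherwise the device is busy)

rbd unmap /dev/rbd0

6. Object Storage

- Ceph object storage relies on the RGW daemon and its own pools, so complete the basic deployment first

# 1. Pools used by RGW (default zone names). RGW creates them automatically on first start; create them by hand only to control their PG count.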

ceph osd pool create default.rgw.buckets.data 8 8

ceph osd pool create default.rgw.control 8 8

ceph osd pool create default.rgw.meta 8 8

ceph osd pool create default.rgw.log 8 8
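Pools created by hand carry no application tag. `ceph health detail` can then report POOL_APP_NOT_ENABLED. The sketch below creates the same four pools in a loop and tags each one with the rgw application. The helper names are hypothetical. With DRY_RUN=1, the commands are printed instead of executed.

```bash
#!/usr/bin/env bash
# Print commands instead of running them when DRY_RUN is set.
run() {
    if [[ -n "${DRY_RUN:-}" ]]; then
        echo "$*"
    else
        "$@"
    fi
}

# create_rgw_pools [pg_num=8]: create the RGW pools and tag them for rgw.
create_rgw_pools() {
    local pg=${1:-8} pool
    for pool in default.rgw.buckets.data default.rgw.control default.rgw.meta default.rgw.log; do
        run ceph osd pool create "$pool" "$pg" "$pg"
        run ceph osd pool application enable "$pool" rgw
    done
}
```

`DRY_RUN=1 create_rgw_pools` lists the eight commands for review, and `create_rgw_pools 16` would run them with 16 PGs per pool.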

# 2. Deploy an RGW service named node01 (service name rgw.node01) on node01

ceph orch apply rgw node01 --placement="node01"

# Check the RGW service (confirm it was created)

ceph orch ls | grep rgw

# Check the RGW daemons (confirm one is running on node01)

ceph orch ps --daemon-type rgw

- Restart / stop / remove the RGW gateway

# Restart the RGW service (all of its daemons)

ceph orch restart rgw.node01

# Stop the RGW service

ceph orch stop rgw.node01

# Remove the RGW service (e.g. to redeploy it)

ceph orch rm rgw.node01

- Configure the RGW port / domain name

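# Change the RGW listening port. cephadm writes rgw_frontends itself (current releases use the beast frontend; civetweb is no longer available), so set the port on the service.

ceph orch apply rgw node01 --placement="node01" --port=80

# Set the RGW domain name (e.g. s3.ceph.com, used for virtual-hosted-style bucket URLs)

ceph config set rgw rgw_dns_name s3.ceph.com

# Restart RGW to apply the configuration

ceph orch restart rgw.node01

- Create an S3 user (most common)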

# Create user s3-user1 (no --tenant is given, so the user belongs to the default, empty tenant)

radosgw-admin user create --uid="s3-user1" --display-name="S3 User 1"

# Sample output (key fields: access_key / secret_key, which clients use to connect):

# "access_key": "554Z2786E8824792980C",

# "secret_key": "87654321ABCDEFGHIJKLMNOPQRSTUVWXYZ"

- View user information

# Details of one user

radosgw-admin user info --uid="s3-user1"

# List all users

radosgw-admin user list

# Usage statistics for the user (add --sync-stats to refresh them)

radosgw-admin user stats --uid="s3-user1"

- Modify a user (regenerate keys / display name)

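# Generate a new S3 key pair for the user (the old pair stays valid until it is removed with radosgw-admin key rm)

radosgw-admin key create --uid="s3-user1" --key-type="s3" --gen-access-key --gen-secret-key

# Change the user's display name

radosgw-admin user modify --uid="s3-user1" --display-name="New S3 User 1"

- Delete a user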

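# Delete the user's buckets first, otherwise deleting the user fails (add --purge-objects if a bucket is not empty)

radosgw-admin bucket rm --bucket="user1-bucket" --uid="s3-user1"

# Delete the user (radosgw-admin user rm --uid="s3-user1" --purge-data also removes the user's buckets and objects)

radosgw-admin user rm --uid="s3-user1"

- Create a bucket

- radosgw-admin has no bucket-creation command. Buckets are created through the S3 / Swift API, e.g. with s3cmd (a client tool that must be installed first).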

# Install s3cmd (available from EPEL)

dnf install -y s3cmd

# Configure s3cmd (enter the access_key / secret_key and the RGW address)

s3cmd --configure
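As an alternative to the interactive wizard, the configuration file can be written directly, which is useful in scripts. In the sketch below, the option names are standard .s3cfg keys. The helper name, the plain-HTTP setting, and the endpoint value are assumptions to adapt. Setting host_bucket to the plain endpoint (without a %(bucket)s placeholder) makes s3cmd use path-style URLs, so bucket names need no wildcard DNS.

```bash
#!/usr/bin/env bash
# write_s3cfg <access_key> <secret_key> <endpoint host[:port]> [file=~/.s3cfg]
write_s3cfg() {
    local ak=$1 sk=$2 endpoint=$3 cfg=${4:-$HOME/.s3cfg}
    (
        umask 077   # the file holds the secret key: create it readable by the owner only
        cat > "$cfg" <<EOF
[default]
access_key = ${ak}
secret_key = ${sk}
host_base = ${endpoint}
host_bucket = ${endpoint}
use_https = False
EOF
    )
}
```

With jq installed, the keys of the user created above can be passed in directly. In the following command, node01:80 stands for your RGW endpoint: `write_s3cfg "$(radosgw-admin user info --uid=s3-user1 | jq -r '.keys[0].access_key')" "$(radosgw-admin user info --uid=s3-user1 | jq -r '.keys[0].secret_key')" node01:80`.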

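# Create the bucket

s3cmd mb s3://test-bucket

- View bucket information

# List all buckets

radosgw-admin bucket list

# Details and usage of one bucket

radosgw-admin bucket stats --bucket="test-bucket"

# List the objects in the bucket

s3cmd ls s3://test-bucket

- Set a bucket quota (limit size / object count)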

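# Quota for this bucket: at most 10 GB and 1000 objects. Quotas are not enforced until they are enabled.

radosgw-admin quota set --quota-scope=bucket --bucket="test-bucket" --max-size=10G --max-objects=1000

radosgw-admin quota enable --quota-scope=bucket --bucket="test-bucket"

# View the bucket quota (bucket_quota section of the output)

radosgw-admin bucket stats --bucket="test-bucket"

- Delete a bucket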

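# Delete an empty bucket directly

radosgw-admin bucket rm --bucket="test-bucket" --uid="s3-user1"

# Delete a non-empty bucket (delete the objects first, then the bucket; radosgw-admin bucket rm --purge-objects does both)

s3cmd rm --recursive s3://test-bucket

radosgw-admin bucket rm --bucket="test-bucket" --uid="s3-user1"

- Object management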

- Upload / download / delete objects

# Upload a local file to the bucket (with s3cmd)

s3cmd put local-file.txt s3://test-bucket/remote-file.txt

# Download an object from the bucket

s3cmd get s3://test-bucket/remote-file.txt local-file-download.txt

# Delete an object from the bucket

s3cmd rm s3://test-bucket/remote-file.txt

# Delete all objects in the bucket

s3cmd rm --recursive s3://test-bucket/

- View object metadata

# Metadata of one object (client side)

s3cmd info s3://test-bucket/remote-file.txt

# Object information on the server side

radosgw-admin object stat --bucket="test-bucket" --object="remote-file.txt"

- Permissions and quota management

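- Set a per-user quota

# Limit s3-user1 to 50 GB and 5000 objects in total, then enable the quota

radosgw-admin quota set --quota-scope=user --uid="s3-user1" --max-size=50G --max-objects=5000

radosgw-admin quota enable --quota-scope=user --uid="s3-user1"

# View the user quota (user_quota section of the output)

radosgw-admin user info --uid="s3-user1"

- Configure bucket access (public / private)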

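# Make the bucket publicly readable (anyone can download its objects)

s3cmd setacl s3://test-bucket --acl-public

# Make the bucket private (owner only)

s3cmd setacl s3://test-bucket --acl-private

# Grant another RGW user write access to the bucket (for RGW, the canonical ID in the grant is the user's uid)

s3cmd setacl s3://test-bucket --acl-grant=write:another-user-uid

- Common troubleshooting commands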

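# RGW log (to locate connection / permission errors). Run this on node01, and take the daemon name from ceph orch ps --daemon-type rgw.

cephadm logs --name <rgw daemon name>

# Check the RGW data pool

ceph osd pool stats default.rgw.buckets.data

# Check and repair a bucket's index (fixes index / metadata inconsistencies)

radosgw-admin bucket check --bucket="test-bucket" --fix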